*He’s actually a really good friend. I just thought “Pasadena reader Mike” would give the post a certain professional heft.

Casually refer to a movie's "Metascore" or "Tomatometer" percentage, and you might get an actual tomato thrown in your face. Movie ratings cause controversy, particularly when they're presented as "final" or "overall." I'm referring, of course, to the movie review aggregators Metacritic and Rotten Tomatoes, which compile dozens of scores from around the web to calculate one overall rating for each new film.

Many full-time movie fans ignore these scores. Representing a 2-hour work of art with a single, sterile number like "73" is cinematic sacrilege! I regard these individuals with equal parts skepticism and respect. On the one hand, I can't imagine how much free time they must have to see every piece of garbage that gets released. On the other, I respect the sentiment in principle. After all, the most important opinion is your own.

For us lay folk, however, a single score is convenient, helpful, and sometimes indispensable. There's little worse than shelling out $15 (or $30 on a date, or $60 with a family) to see Smurfs 2, only to discover that repeatedly whacking your head against the ticket turnstile would have made for a more pleasant evening. Sites like Rotten Tomatoes and Metacritic can give you the general consensus ahead of time. Unlike that Facebook recommendation from Stacey or the one-off ‘professional’ post from a guest reviewer for the Chicago Tribune, you'll rarely get bad advice after distilling 30+ opinions down to one. It's just math.**

**Okay, that's the single most pretentious thing I've ever written.

With all that said, here is my definitive guide to Rotten Tomatoes and Metacritic, complete with critiques, graphs, a scatterplot, and final recommendations. Get comfortable.

Part I - The Calculation Methods

Rotten Tomatoes

The Tomatometer reflects the percentage of reviewers who liked a given movie. Which reviewers? Those from print publications, broadcast outlets, and online publications, each of which must maintain a certain level of traffic, quality, and consistency to be counted. (See the full explanation here.) For popular films, Rotten Tomatoes counts over 200 different reviewers. As if a single percentage figure weren't simple enough, Rotten Tomatoes categorizes every movie as either "Fresh" or "Rotten," based on whether the percentage is above or below 60%. Think high school: as long as you don't get an F, you pass.
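For the spreadsheet-inclined, here is a rough sketch of that arithmetic in Python. It is not Rotten Tomatoes' actual code: the function names are made up for illustration, and treating exactly 60% as "Fresh" is my assumption, since the description above only says "above or below."

```python
FRESH_THRESHOLD = 60  # percent; the cutoff described above


def tomatometer(liked_flags):
    """Percentage of reviews counted as positive, as a whole number.

    liked_flags: one True/False per review (True = the reviewer liked it).
    """
    if not liked_flags:
        raise ValueError("need at least one review")
    return round(100 * sum(liked_flags) / len(liked_flags))


def label(score):
    """Assumption: a score of exactly 60 counts as Fresh."""
    return "Fresh" if score >= FRESH_THRESHOLD else "Rotten"


# Hypothetical film: 7 positive reviews, 3 negative ones.
reviews = [True] * 7 + [False] * 3
score = tomatometer(reviews)
print(score, label(score))
```

Notice that nothing in the calculation cares how much anyone liked the movie. A rave and a shrug of approval count exactly the same.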

Metacritic

The Metascore is not a percentage: it's a weighted average of scores from top critics, normalized on a scale from 0 to 100. Which critics? A prestigious group of 30-50 writers from the most recognizable names in the industry (New York Times, Wall Street Journal, Chicago Sun-Times, etc.). Metacritic editors must convert critics' scores to fit their model, with some methods more controversial than others. They convert four-star ratings like you'd expect (3 out of 4 stars = 75/100), convert letter grades a bit differently than you might expect (a C+ gets a 58/100), and, most subjectively, assign their own scores when the critic does not (for example, they might assign an 80 to a fairly positive review with a few reservations). Finally, Metacritic weights the critics’ review scores according to each publication's "quality and overall stature" (see the full explanation here). They refuse to disclose these weights, which is both pompous and, in my mind, smart.
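Here is a minimal sketch of the weighted-average idea in Python. Since Metacritic keeps its weights secret, the weights below are invented purely for illustration, the function names are my own, and I skip whatever normalization step Metacritic applies. The three input scores are the examples from the paragraph above.

```python
def stars_to_score(stars, out_of=4):
    """Convert a star rating to a 0-100 score (3 of 4 stars -> 75)."""
    return 100 * stars / out_of


def weighted_average(scored_reviews):
    """scored_reviews: list of (score, weight) pairs."""
    total_weight = sum(weight for _, weight in scored_reviews)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(score * weight for score, weight in scored_reviews) / total_weight


# Hypothetical weights: the real ones are not public.
reviews = [
    (stars_to_score(3), 1.5),  # 3 out of 4 stars, from a heavily weighted outlet
    (58, 1.0),                 # a C+ letter grade, per the conversion above
    (80, 1.0),                 # an editor-assigned score for an unscored review
]
print(round(weighted_average(reviews)))
```

Bump the first weight up or down and the final number moves with it, which is exactly why the secrecy around those weights matters.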

Part II - Pros and Cons

Rotten Tomatoes

Pros

- Simple to understand. It's the percentage of people who liked the movie. This immediately makes sense to everyone.

- Objective. Outside of the occasional mixed review, it's pretty easy to tell whether a given reviewer liked or disliked a film. For Rotten Tomatoes, that's the only editorial involvement when calculating scores.

- Matches how many of us think about movies. The average consumer doesn't think, "yes, I've heard the movie is good, but HOW good?" She just wants to know if she should see it or not. Rotten Tomatoes provides the answer. See the "Fresh" movies; skip the "Rotten" ones.

- Good branding. Outside of IMDB, Rotten Tomatoes is the most popular movie site on the web. People recognize the name, which builds trust.

Cons

- Oversimplifies. Particularly with "Fresh" and "Rotten," Rotten Tomatoes sacrifices nuance in favor of simplicity.

- Advanced metrics are buried. Rotten Tomatoes apologists point to additional scores like "Top Critics" (includes only an elite group of ~40 top reviewers) when people like me complain about oversimplification. But with the big tomato icon displayed proudly at the top and the Top Critics score a tiny filter at the bottom of the page, most users won't bother with anything besides that overall score.

- Bloated. As much as Rotten Tomatoes attempts to filter out bad critics, their inclusive approach invariably allows a few lousy reviewers into the Tomatometer mix.

Metacritic

Pros

- Nuanced. Metacritic scores attempt to tell you just how good each movie is. A 97% on Rotten Tomatoes means almost everyone liked it, but it doesn't necessarily tell you how many people loved it. On Metacritic, it does.

- Reliable sources. Metacritic doesn't have any bad eggs—only the best of the best get their say. When it comes to critic admission standards, Rotten Tomatoes might be UCLA, but Metacritic is Harvard.

Cons

- Difficult to explain. The simpler something is, the more people tend to trust it. There's a reason "hope and change" beats a 160-page economic plan.

- So-so branding. Whenever I bring up Metacritic, I get one of three responses. The most popular: "What's that?" The second most popular: "Mealcritic?" The third most popular: "Ben: whatever you put in the microwave just exploded. Also, what's Mealcritic?"

- Subjectivity. No matter how many reviews Metacritic editors read, assigning one score based on the general thrust of a review will always be vulnerable to human error.

Pro or con?

- Snooty. As the web expands, social networks grow, and movie-watching methods multiply, we no longer have to rely on so-called Old Media for film recommendations. Metacritic sits back defiantly, sipping its whiskey and smoking its Old Media cigars. Obstinate, or genius?

Part III - Some Data Analysis

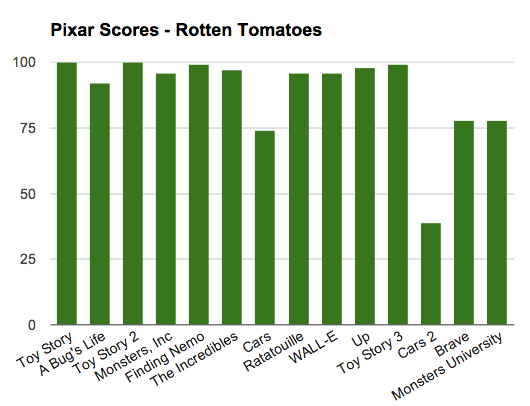

This certainly matches the prevailing narrative about Pixar: a top-tier animation studio that stumbled only once (Cars) before falling off more obviously in recent years.

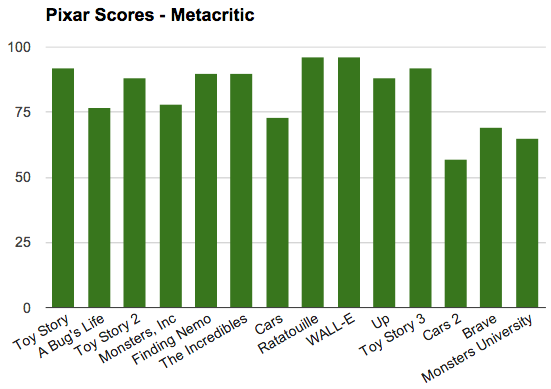

Now, take a look at Metacritic’s scores:

Just like Metacritic’s scoring methodology, it’s not nearly the clean, compelling narrative told by Rotten Tomatoes’ scores. Certainly, Buzzfeed and Bleacher Report would opt for the Tomatometer version. A simple narrative = more page views.

But I think Metacritic’s version is more true to life. In the second graph, we see the subtlety of human performance, with soft peaks and valleys, nothing perfect (Rotten Tomatoes rates four Pixar films at either 99 or 100; Metacritic’s Pixar scores peak at 96), and a more reasonable Cars 2 rating (a mediocre film, but not historically terrible).

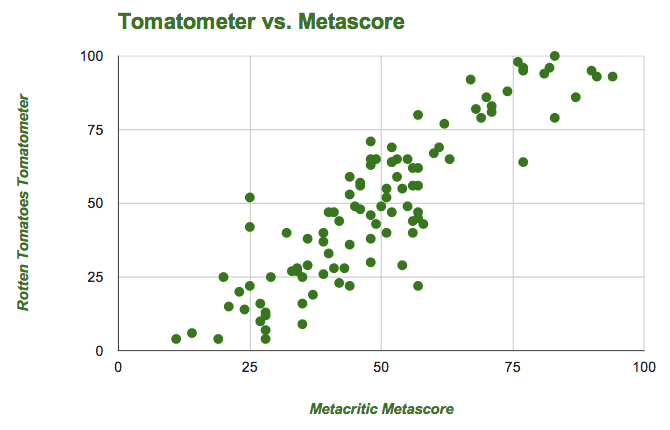

Perhaps Pixar is a special case. After all, we’re focusing on a studio with abnormally excellent movies. What about a random sampling of 100 recent releases?

At first blush, this is pretty boring news for Metacritic, where every other film receives a bland score between 40 and 60. Again, Rotten Tomatoes wins on sheer intrigue—load the homepage and you’ll immediately see several dazzlingly high and dizzyingly low scores. What fun!

Unfortunately, Rotten Tomatoes becomes a lot less interesting the more you think about its system. It’s all too easy for an inoffensive, family-friendly animated feature to earn a 95%+ overall score, simply because everyone generally liked it. Brilliant films like Zero Dark Thirty (93%) and Inglourious Basterds (88%) lose points for taking risks.
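To see how this happens, here is a toy comparison in Python with two entirely made-up films. I'm assuming a review counts as positive when its score is 60 or higher, which is a simplification of my own (Rotten Tomatoes goes by the reviewer's verdict, not a numeric cutoff), and the plain average stands in for a Metacritic-style score without any weighting.

```python
from statistics import mean

POSITIVE_CUTOFF = 60  # assumption for this toy example only


def percent_positive(scores):
    """Share of reviews at or above the cutoff, as a whole-number percentage."""
    return round(100 * sum(s >= POSITIVE_CUTOFF for s in scores) / len(scores))


# Hypothetical data: 20 critics each.
safe_film = [65] * 20               # everyone mildly likes it
risky_film = [95] * 17 + [40] * 3   # most critics love it, a few are put off

for name, scores in [("safe", safe_film), ("risky", risky_film)]:
    print(name, percent_positive(scores), mean(scores))
```

The safe film gets a perfect 100% with an average of just 65, while the risky film drops to 85% despite averaging 86.75.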

Worse, consider the number of perfect 100s on each site. Metacritic currently has ten total all-time (and eight of these are classic re-releases). Rotten Tomatoes has had 54 in 2013 alone. This is awful news for anyone who enjoys ranking the best films of all time. In any greatest- or worst-ever list, Rotten Tomatoes is practically worthless.

Part IV – Recommendations

You’ll prefer Rotten Tomatoes if you…

- See movies mostly on a whim

- Prefer to know how popular a film is, rather than how good it is

- Enjoy the quick stimulation of a shocking score

- Greatly enjoy grocery store produce

- Believe that historically great and historically awful movies are coming out on a near-weekly basis

You’ll prefer Metacritic if you…

- Demand to know precisely how good a film is

- Have a lingering respect for Old Media

- Trust editors you don’t know to assign specific scores to word-only reviews

- Love making top 10 lists

- Acknowledge the boring fact that by and large, most movies are somewhere between “kinda bad” and “somewhat good”
